Introduction to Lidar

What Is Lidar?

Lidar, which stands for Light Detection and Ranging, is a method of 3-D laser scanning.

Lidar sensors provide 3-D structural information about an environment. Advanced driver assistance systems (ADAS), robots, and unmanned aerial vehicles (UAVs) employ lidar sensors for accurate 3-D perception, navigation, and mapping.

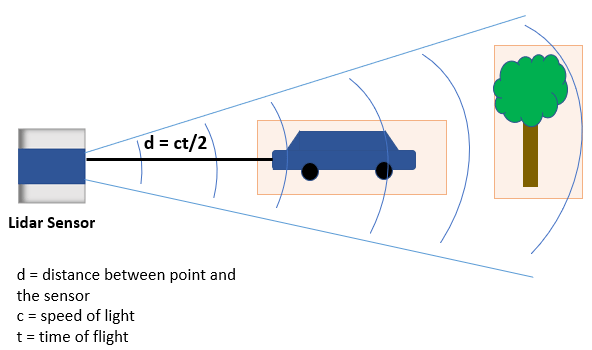

Lidar is an active remote sensing system that uses laser light to measure the distance of the sensor from objects in a scene. A lidar sensor emits laser pulses that reflect off surrounding objects. The sensor then captures the reflected light and applies the time-of-flight principle: it measures how long each pulse takes to return and converts that round-trip time into a distance. From these distances, the sensor perceives the structure of its surroundings.
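The time-of-flight calculation itself is short. The pulse travels to the object and back, so the range is half the round-trip time multiplied by the speed of light, d = c·t/2. The following minimal Python sketch illustrates only that arithmetic. It is not a Lidar Toolbox function, the name `tof_range` is hypothetical, and it treats the speed of light in air as equal to its vacuum value.

```python
# Speed of light in a vacuum, in meters per second. Air slows light by
# about 0.03%, which this sketch ignores.
SPEED_OF_LIGHT = 299_792_458.0


def tof_range(round_trip_time_s: float) -> float:
    """Return the sensor-to-object distance in meters for one laser return.

    The pulse covers the distance twice (out and back), so the one-way
    range is half of the speed of light times the round-trip time.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A return that arrives 200 nanoseconds after the pulse was emitted.
    print(f"{tof_range(200e-9):.2f} m")  # 29.98 m
```

At this scale, a timing error of 1 ns corresponds to roughly 15 cm of range error. This is why lidar sensors depend on precise timing electronics.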

A lidar sensor stores these reflected laser pulses, or laser returns, as a collection of points. This collection of points is called a point cloud.

What Is a Point Cloud?

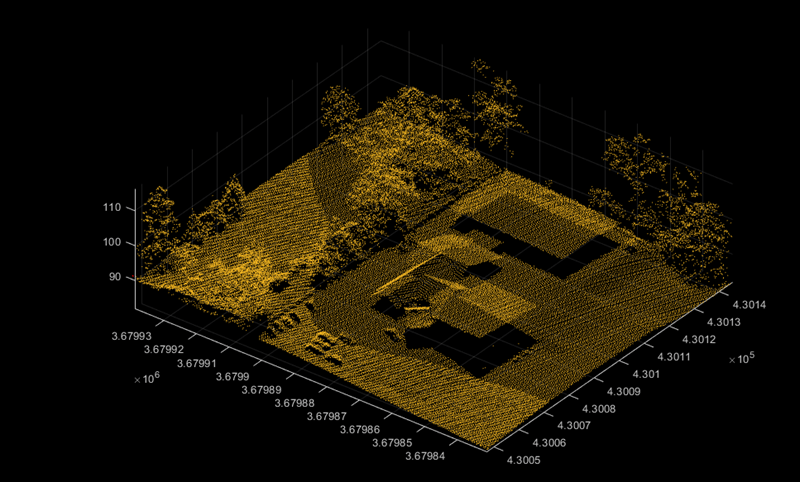

A point cloud is a collection of 3-D points in space. Just as an image is the output of a camera, a point cloud is the output of a lidar sensor.

Each point in a point cloud can carry attributes such as its location in xyz-coordinates, the intensity of the returned laser light, and the surface normal. Together, these points form a 3-D map of the environment. You can store and process the information from a point cloud in MATLAB® by using a pointCloud object.
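To make per-point attributes concrete, here is a minimal Python sketch of a point cloud container. It is not the MATLAB pointCloud object or its API. The class name `SimplePointCloud` and its methods are hypothetical, chosen only to show that every attribute holds exactly one entry per point.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point3 = Tuple[float, float, float]


@dataclass
class SimplePointCloud:
    """Hypothetical point cloud container: one entry per point in each attribute."""

    location: List[Point3]                  # xyz-coordinates, in meters
    intensity: Optional[List[float]] = None
    normal: Optional[List[Point3]] = None   # unit surface normals, if available

    def __post_init__(self) -> None:
        # Every optional attribute must line up with the point locations.
        n = len(self.location)
        for name in ("intensity", "normal"):
            values = getattr(self, name)
            if values is not None and len(values) != n:
                raise ValueError(f"{name} has {len(values)} entries, expected {n}")

    @property
    def count(self) -> int:
        """Number of points in the cloud."""
        return len(self.location)

    def limits(self) -> Tuple[Tuple[float, float], ...]:
        """Return ((xmin, xmax), (ymin, ymax), (zmin, zmax))."""
        if not self.location:
            raise ValueError("empty point cloud has no limits")
        return tuple(
            (min(p[axis] for p in self.location), max(p[axis] for p in self.location))
            for axis in range(3)
        )


if __name__ == "__main__":
    pc = SimplePointCloud(
        location=[(0.0, 0.0, 0.0), (1.0, 2.0, 0.5)],
        intensity=[10.0, 42.0],
    )
    print(pc.count)     # 2
    print(pc.limits())  # ((0.0, 1.0), (0.0, 2.0), (0.0, 0.5))
```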

Point clouds can be either unorganized or organized. In an unorganized point cloud, the points are stored as a single stream of 3-D coordinates. In an organized point cloud, the points are arranged into rows and columns based on the spatial relationships between them. For more information about organized and unorganized point clouds, see What Are Organized and Unorganized Point Clouds?
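The practical difference between the two layouts fits in a few lines of Python. This sketch works under stated assumptions and does not reflect the Lidar Toolbox representation. The organized cloud is a hypothetical list of rows, and cells without a laser return hold NaN coordinates. Flattening the grid produces an unorganized stream. The grid itself allows neighbor lookup by row and column index.

```python
import math
from typing import List, Tuple

Point3 = Tuple[float, float, float]
# Organized cloud: a rows x columns grid of points. In this sketch, a cell
# with no laser return holds NaN coordinates.
OrganizedCloud = List[List[Point3]]


def is_valid(p: Point3) -> bool:
    """A point is valid when all three coordinates are finite numbers."""
    return all(math.isfinite(c) for c in p)


def to_unorganized(grid: OrganizedCloud) -> List[Point3]:
    """Flatten an organized cloud into a single stream of valid points.

    The row/column structure is lost, so neighbor relationships must later
    be recovered by a spatial search instead of by grid indexing.
    """
    return [p for row in grid for p in row if is_valid(p)]


def grid_neighbors(grid: OrganizedCloud, r: int, c: int) -> List[Point3]:
    """Return the valid 4-connected neighbors of cell (r, c).

    This constant-time lookup is what the organized layout provides.
    """
    rows, cols = len(grid), len(grid[0])
    out = []
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        rr, cc = r + dr, c + dc
        if 0 <= rr < rows and 0 <= cc < cols and is_valid(grid[rr][cc]):
            out.append(grid[rr][cc])
    return out


if __name__ == "__main__":
    nan = float("nan")
    grid = [
        [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)],
        [(0.0, 1.0, 1.0), (nan, nan, nan)],  # no return in this cell
    ]
    print(len(to_unorganized(grid)))   # 3
    print(grid_neighbors(grid, 0, 0))  # [(0.0, 1.0, 1.0), (1.0, 0.0, 1.0)]
```

Once a cloud is flattened, finding a point's neighbors requires a spatial search structure, such as a k-d tree, rather than simple index arithmetic.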

Types of Lidar

You can broadly divide lidar sensors into two types, based on whether they are used for airborne or terrestrial applications.

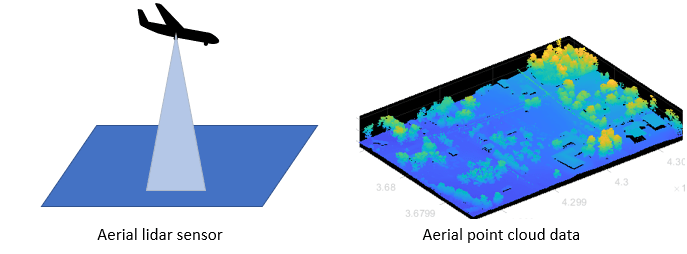

Airborne or Aerial Lidar

Airborne lidar sensors are attached to helicopters, aircraft, or UAVs. They include topographic and bathymetric sensors.

Topographic sensors help in monitoring and mapping the topography of a region. Applications include urban planning, landscape ecology, forest planning and mapping.

Bathymetric sensors estimate the depth of water bodies. These sensors have an additional green laser, whose light can penetrate the water column. Applications include coastline management and oceanography.
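A simplified depth calculation shows how the green pulse is used. The sketch below makes three assumptions:

- the beam is vertical (nadir);
- water has a refractive index of about 1.33;
- the sensor records separate returns from the water surface and from the bottom.

Real bathymetric processing also corrects for refraction at the surface, off-nadir scan angles, and other effects. The function name `water_depth` is hypothetical.

```python
SPEED_OF_LIGHT = 299_792_458.0   # m/s; air is treated as a vacuum here
WATER_REFRACTIVE_INDEX = 1.33    # assumed typical value; varies with temperature and salinity


def water_depth(surface_return_s: float, bottom_return_s: float,
                n_water: float = WATER_REFRACTIVE_INDEX) -> float:
    """Estimate water depth in meters from two return times of one green pulse.

    Assumes a vertical (nadir) beam, so no refraction-angle correction is
    applied. Inside the water the light travels at c / n_water, and it
    crosses the water column twice (down and back up).
    """
    dt = bottom_return_s - surface_return_s
    if dt < 0:
        raise ValueError("the bottom return must arrive after the surface return")
    return (SPEED_OF_LIGHT / n_water) * dt / 2.0


if __name__ == "__main__":
    # Bottom return arriving 100 ns after the surface return.
    print(f"{water_depth(0.0, 100e-9):.2f} m")  # 11.27 m
```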

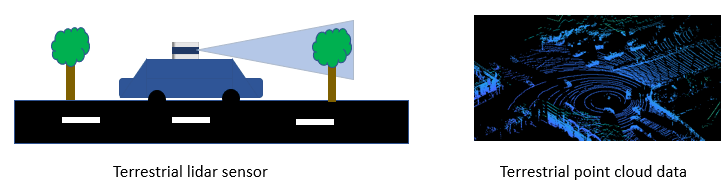

Terrestrial Lidar

Terrestrial lidar sensors scan the surface of the Earth or the immediate surroundings of the sensor on land. These sensors can be static or mobile.

Static sensors collect point clouds from a fixed location. They are used in applications such as mining, archaeology, and architecture, as well as in smartphones.

Mobile sensors are mounted on vehicles and are most commonly used in autonomous driving systems. Other applications include robotics, transport planning, and mapping.

Advantages of Lidar Technology

Lidar sensors are useful when you need to take accurate measurements at longer distances and higher resolutions than radar sensors allow, or in environmental or lighting conditions that would negatively affect a camera. Lidar scans are also natively 3-D and do not require additional software to add depth.

You can use lidar sensors to detect small details, scan dense environments, and collect data at night or in inclement weather, all at high speed.

Lidar Processing Overview

I/O and Supported Hardware

Lidar sensors from companies such as Velodyne®, Ouster®, Hesai®, and Ibeo® store point cloud data in a variety of formats, so Lidar Toolbox™ provides tools to import and export point clouds in several file formats. Lidar Toolbox currently supports reading data from the PLY, PCAP, PCD, LAS, LAZ, and Ibeo data container (IDC) formats, and writing point cloud data to the PLY, PCD, and LAS formats. For more information on file input and output, see Import, Export, and Visualization. You can also stream live data from Velodyne and Ouster sensors. For more details on streaming live data, see Lidar Data Acquisition and Sensor Simulation.
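To show what a point cloud file format looks like, here is a minimal Python sketch that writes and reads the ASCII variant of PLY. That variant stores a plain-text header followed by one line per point. This is not the Lidar Toolbox reader or writer. It handles only ASCII files whose vertex element comes first, ignores faces and binary encodings, and uses hypothetical function names.

```python
import io
from typing import Dict, List, Sequence, TextIO

Point = Dict[str, float]


def write_ascii_ply(f: TextIO, points: List[Point],
                    props: Sequence[str] = ("x", "y", "z")) -> None:
    """Write points as an ASCII PLY file with one double-precision property per name."""
    f.write("ply\nformat ascii 1.0\n")
    f.write(f"element vertex {len(points)}\n")
    for name in props:
        f.write(f"property double {name}\n")
    f.write("end_header\n")
    for p in points:
        # repr() of a float round-trips exactly when parsed back with float().
        f.write(" ".join(repr(float(p[name])) for name in props) + "\n")


def read_ascii_ply(f: TextIO) -> List[Point]:
    """Read the vertex element of an ASCII PLY file.

    Simplification: assumes 'vertex' is the first element in the file body,
    and ignores any other elements (such as faces).
    """
    if f.readline().strip() != "ply":
        raise ValueError("not a PLY file")
    count, props, in_vertex = 0, [], False
    while True:
        line = f.readline()
        if not line:
            raise ValueError("header ended without end_header")
        tokens = line.split()
        if not tokens:
            continue
        if tokens[0] == "format" and tokens[1] != "ascii":
            raise ValueError("this sketch reads only ASCII PLY files")
        elif tokens[0] == "element":
            in_vertex = tokens[1] == "vertex"
            if in_vertex:
                count = int(tokens[2])
        elif tokens[0] == "property" and in_vertex:
            props.append(tokens[-1])  # last token is the property name
        elif tokens[0] == "end_header":
            break
        # 'comment' and 'obj_info' lines fall through and are ignored.
    points = []
    for _ in range(count):
        values = f.readline().split()
        points.append({name: float(v) for name, v in zip(props, values)})
    return points


if __name__ == "__main__":
    buf = io.StringIO()
    write_ascii_ply(buf, [{"x": 0.0, "y": 1.0, "z": 2.0, "intensity": 7.0}],
                    props=("x", "y", "z", "intensity"))
    buf.seek(0)
    print(read_ascii_ply(buf))  # [{'x': 0.0, 'y': 1.0, 'z': 2.0, 'intensity': 7.0}]
```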

Preprocessing

Lidar Toolbox enables you to perform preprocessing operations such as downsampling, denoising, and cropping on your point cloud data. To learn more about visualizing and preprocessing point clouds, see Get Started with Point Cloud Analyzer.
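Each of the three preprocessing operations takes only a few lines. The following Python sketch uses simple, commonly used techniques:

- an axis-aligned box for cropping;
- voxel-grid averaging for downsampling;
- statistical outlier removal, based on mean distance to the k nearest neighbors, for denoising.

It is a brute-force illustration with hypothetical function names. It does not reproduce the algorithms or defaults that Lidar Toolbox uses.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Point3 = Tuple[float, float, float]


def crop(points: List[Point3], xlim, ylim, zlim) -> List[Point3]:
    """Keep only points inside the axis-aligned box given by (min, max) limits per axis."""
    return [
        p for p in points
        if xlim[0] <= p[0] <= xlim[1]
        and ylim[0] <= p[1] <= ylim[1]
        and zlim[0] <= p[2] <= zlim[1]
    ]


def voxel_downsample(points: List[Point3], voxel_size: float) -> List[Point3]:
    """Replace all points that share a cubic voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    voxels: Dict[Tuple[int, ...], List[Point3]] = defaultdict(list)
    for p in points:
        key = tuple(math.floor(c / voxel_size) for c in p)
        voxels[key].append(p)
    return [
        tuple(sum(axis) / len(group) for axis in zip(*group))
        for group in voxels.values()
    ]


def remove_outliers(points: List[Point3], k: int = 4, num_std: float = 1.0) -> List[Point3]:
    """Statistical outlier removal.

    For each point, compute the mean distance to its k nearest neighbors; drop
    points whose mean distance exceeds the global mean by more than num_std
    standard deviations. Brute force, O(n^2): for illustration only.
    """
    if len(points) <= k:
        return list(points)
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    mu = sum(mean_dists) / len(mean_dists)
    sigma = math.sqrt(sum((d - mu) ** 2 for d in mean_dists) / len(mean_dists))
    return [p for p, d in zip(points, mean_dists) if d <= mu + num_std * sigma]


if __name__ == "__main__":
    # Corners of a unit cube plus one stray point far away.
    cloud = [(float(x), float(y), float(z)) for x in (0, 1) for y in (0, 1) for z in (0, 1)]
    cloud.append((100.0, 100.0, 100.0))
    cleaned = remove_outliers(cloud)
    print(len(cleaned))  # 8
    # Crop to the bottom face, then merge its four corners into one voxel.
    print(len(voxel_downsample(crop(cleaned, (0, 1), (0, 1), (0, 0.5)), 2.0)))  # 1
```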

Labeling, Segmentation and Detection

Labeling objects in a point cloud helps you organize and analyze ground truth data for object detection and segmentation. To learn more about lidar labeling, see Get Started with the Lidar Labeler.
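Cuboid labels are a common way to annotate objects in a point cloud. This Python sketch turns a hypothetical cuboid label into per-point ground truth by testing which points fall inside it. The label has a center, a size, and a yaw angle about the z-axis. The `CuboidLabel` class and `label_points` function are illustrative assumptions, not the Lidar Labeler data format.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


@dataclass
class CuboidLabel:
    """Hypothetical cuboid annotation for one object."""

    name: str
    center: Point3                      # cuboid center, in meters
    size: Tuple[float, float, float]    # (length, width, height), in meters
    yaw: float = 0.0                    # rotation about the z-axis, in radians

    def contains(self, p: Point3) -> bool:
        """True if point p lies inside the cuboid (boundary included)."""
        # Express the point in the cuboid's own frame: translate, then undo the yaw.
        dx, dy, dz = (p[0] - self.center[0], p[1] - self.center[1], p[2] - self.center[2])
        c, s = math.cos(-self.yaw), math.sin(-self.yaw)
        local_x = c * dx - s * dy
        local_y = s * dx + c * dy
        length, width, height = self.size
        return (abs(local_x) <= length / 2
                and abs(local_y) <= width / 2
                and abs(dz) <= height / 2)


def label_points(points: Sequence[Point3], cuboids: Sequence[CuboidLabel],
                 background: str = "unlabeled") -> List[str]:
    """Give each point the name of the first cuboid that contains it."""
    return [next((b.name for b in cuboids if b.contains(p)), background) for p in points]


if __name__ == "__main__":
    # A car 10 m ahead, rotated 90 degrees so its length runs along y.
    car = CuboidLabel("car", center=(10.0, 0.0, 1.0), size=(4.0, 2.0, 1.5), yaw=math.pi / 2)
    print(label_points([(10.0, 1.9, 1.0), (0.0, 0.0, 0.0)], [car]))  # ['car', 'unlabeled']
```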

Many applications for lidar processing rely on deep learning algorithms to segment, detect, track, and analyze objects of interest in a point cloud. To learn more about point cloud processing using deep learning, see Getting Started with Point Clouds Using Deep Learning.

Calibration and Sensor Fusion

Most modern sensing systems use sensor suites that contain multiple sensors. To obtain meaningful information from multiple sensors, you must first calibrate these sensors. Calibration is the process of aligning the coordinate systems of multiple sensors through rotational and translational transformations. For more information about coordinate systems, see Coordinate Systems in Lidar Toolbox.
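Calibration estimates a rotational and translational transformation, which is applied as p' = Rp + t. Here R is a 3-by-3 rotation matrix and t is a translation vector. The Python sketch below shows only this application step and its inverse, given a known R and t. Estimating R and t is the calibration problem itself and is not shown. The function names and the example mounting values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Rotation = Sequence[Sequence[float]]  # 3x3, row-major


def rotation_about_z(angle_rad: float) -> Rotation:
    """Rotation matrix for a yaw of angle_rad radians about the z-axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return ((c, -s, 0.0), (s, c, 0.0), (0.0, 0.0, 1.0))


def apply_rigid_transform(points: Sequence[Point3], R: Rotation, t: Point3) -> List[Point3]:
    """Map points from a source sensor frame into a target frame: p' = R p + t."""
    return [
        tuple(sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))
        for p in points
    ]


def invert_rigid_transform(R: Rotation, t: Point3):
    """Return (R^T, -R^T t), the transform that maps target-frame points back."""
    Rt = tuple(tuple(R[k][i] for k in range(3)) for i in range(3))
    t_inv = tuple(-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3))
    return Rt, t_inv


if __name__ == "__main__":
    # Hypothetical extrinsics: lidar yawed 90 degrees and mounted 1.5 m higher.
    R = rotation_about_z(math.pi / 2)
    t = (0.0, 0.0, 1.5)
    moved = apply_rigid_transform([(1.0, 0.0, 0.0)], R, t)
    print([tuple(round(v, 6) for v in p) for p in moved])  # [(0.0, 1.0, 1.5)]
```

Because a rigid transform only rotates and translates, distances between points are unchanged. Calibration aligns frames without distorting the geometry of either sensor's data.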

Lidar Toolbox provides various tools for calibration and sensor fusion. Many applications involve capturing the same scene using both a lidar sensor and a camera. To construct an accurate 3-D scene, you must fuse the data from these sensors by first calibrating them to one another. For more information on lidar-camera fusion, see What Is Lidar-Camera Calibration? and Get Started with Lidar Camera Calibrator.
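After calibration, a common fusion step is to project lidar points into the image. This minimal Python sketch assumes:

- an ideal pinhole camera with focal lengths `fx`, `fy` and principal point `cx`, `cy`;
- no lens distortion;
- an extrinsic rotation `R` and translation `t` that map lidar coordinates into a camera frame whose z-axis points forward.

All names are hypothetical. This is not the Lidar Camera Calibrator output format.

```python
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def project_to_image(points: Sequence[Point3], R: Sequence[Sequence[float]], t: Point3,
                     fx: float, fy: float, cx: float, cy: float,
                     width: int, height: int) -> List[Tuple[float, float, float]]:
    """Project lidar points into an ideal pinhole camera.

    Returns (u, v, depth) for each point that lies in front of the camera and
    inside the image bounds. Lens distortion is ignored.
    """
    pixels = []
    for p in points:
        # Lidar frame -> camera frame with the extrinsic calibration: Rp + t.
        xc, yc, zc = (sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))
        if zc <= 0:
            continue  # behind the camera; cannot appear in the image
        # Perspective division, then scale and shift into pixel coordinates.
        u = fx * xc / zc + cx
        v = fy * yc / zc + cy
        if 0 <= u < width and 0 <= v < height:
            pixels.append((u, v, zc))
    return pixels


if __name__ == "__main__":
    identity = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
    pts = [(0.0, 0.0, 10.0), (1.0, 0.0, 10.0), (0.0, 0.0, -5.0)]
    print(project_to_image(pts, identity, (0.0, 0.0, 0.0),
                           fx=500.0, fy=500.0, cx=320.0, cy=240.0, width=640, height=480))
    # [(320.0, 240.0, 10.0), (370.0, 240.0, 10.0)]
```

The returned depth lets you color the image by distance, or look up the camera pixel for each lidar point to attach color to the point cloud.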

Navigation and Mapping

Mapping is the process of building a map of the environment around an autonomous system. You can use tools in Lidar Toolbox to perform simultaneous localization and mapping (SLAM), which is the process of calculating the position and orientation of the system, with respect to its surroundings, while simultaneously mapping its environment. For more information, see Implement Point Cloud SLAM in MATLAB.
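The mapping half of SLAM works in two steps. First, chain the relative motion between consecutive scans into absolute poses. Then transform each scan into the map frame. The Python sketch below assumes planar motion (x, y, and yaw) and takes the relative poses as given, for example from scan registration. A full SLAM system would also estimate those poses and correct accumulated drift, for example with loop closure. Function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Pose2 = Tuple[float, float, float]     # (x, y, yaw): planar motion is assumed
Point3 = Tuple[float, float, float]


def compose(a: Pose2, b: Pose2) -> Pose2:
    """Apply relative motion b, expressed in the frame of pose a."""
    x, y, th = a
    dx, dy, dth = b
    return (x + math.cos(th) * dx - math.sin(th) * dy,
            y + math.sin(th) * dx + math.cos(th) * dy,
            th + dth)


def to_map_frame(points: Sequence[Point3], pose: Pose2) -> List[Point3]:
    """Rotate each point by the pose yaw and shift it by the pose position."""
    x, y, th = pose
    c, s = math.cos(th), math.sin(th)
    return [(x + c * px - s * py, y + s * px + c * py, pz) for px, py, pz in points]


def build_map(scans: Sequence[Sequence[Point3]], relative_poses: Sequence[Pose2]):
    """Chain relative poses into absolute poses and merge all scans into one map.

    relative_poses[k] is the motion from scan k to scan k + 1 (for example,
    from scan registration). No drift correction is performed.
    """
    if len(relative_poses) != len(scans) - 1:
        raise ValueError("need exactly one relative pose between consecutive scans")
    poses = [(0.0, 0.0, 0.0)]  # the first scan defines the map frame
    for rel in relative_poses:
        poses.append(compose(poses[-1], rel))
    world_map: List[Point3] = []
    for scan, pose in zip(scans, poses):
        world_map.extend(to_map_frame(scan, pose))
    return poses, world_map


if __name__ == "__main__":
    # One point, 1 m ahead of the sensor, in each of two scans; between the
    # scans the vehicle moves 1 m forward and turns left 90 degrees.
    poses, world_map = build_map([[(1.0, 0.0, 0.0)], [(1.0, 0.0, 0.0)]],
                                 [(1.0, 0.0, math.pi / 2)])
    print([tuple(round(v, 6) for v in p) for p in world_map])  # [(1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
```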

Applications of Lidar Technology

Lidar Toolbox provides many tools for typical workflows in different applications of lidar processing.

Advanced Driver Assistance Systems — You can detect cars, trucks, and other objects using the lidar sensors mounted on moving vehicles. You can semantically segment these point clouds to detect and track objects as they move. To learn more about vehicle detection and tracking using Lidar Toolbox, see the Detect, Classify, and Track Vehicles Using Lidar example.
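Object detection in this setting often relies on deep learning. As a simpler, non-learning illustration of the same idea, the Python sketch below swaps in two classical steps. It first removes ground points with a flat-ground height threshold. It then groups the remaining points into objects with Euclidean distance clustering. The thresholds and function names are hypothetical, and the flat-ground assumption rarely holds exactly on real roads.

```python
import math
from collections import deque
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def remove_ground(points: Sequence[Point3], ground_z: float = 0.0,
                  tolerance: float = 0.2) -> List[Point3]:
    """Crude ground removal: keep points more than `tolerance` above a flat ground plane."""
    return [p for p in points if p[2] > ground_z + tolerance]


def euclidean_clusters(points: Sequence[Point3], max_gap: float,
                       min_points: int = 1) -> List[List[int]]:
    """Group point indices so that points within max_gap of each other share a cluster.

    Breadth-first search with brute-force neighbor queries, O(n^2).
    """
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue, members = deque([seed]), [seed]
        while queue:
            i = queue.popleft()
            near = [j for j in unvisited if math.dist(points[i], points[j]) <= max_gap]
            for j in near:
                unvisited.remove(j)
                queue.append(j)
                members.append(j)
        if len(members) >= min_points:
            clusters.append(sorted(members))
    return clusters


def bounding_box(points: Sequence[Point3], indices: Sequence[int]):
    """Axis-aligned bounds ((xmin, xmax), (ymin, ymax), (zmin, zmax)) of one cluster."""
    xs, ys, zs = zip(*(points[i] for i in indices))
    return (min(xs), max(xs)), (min(ys), max(ys)), (min(zs), max(zs))


if __name__ == "__main__":
    scan = [(0.0, 0.0, 0.0), (5.0, 5.0, 0.1),                       # ground returns
            (10.0, 0.0, 1.0), (10.3, 0.0, 1.0), (10.3, 0.3, 1.0),   # object A
            (20.0, 5.0, 1.0), (20.0, 5.4, 1.2)]                     # object B
    obstacles = remove_ground(scan)
    for cluster in euclidean_clusters(obstacles, max_gap=0.5):
        print(len(cluster), bounding_box(obstacles, cluster))
```

The resulting bounding boxes could then feed a tracker. Tracking itself is outside the scope of this sketch.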

Remote Sensing — Airborne lidar sensors can generate point clouds that provide information about the vegetation cover in an area. To learn more about remote sensing using Lidar Toolbox, see the Extract Individual Tree Attributes and Forest Metrics from Aerial Lidar Data example.
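A crude vegetation metric can be computed by gridding an aerial point cloud. This Python sketch estimates a canopy height for each grid cell as the highest return minus the lowest return, assuming every cell contains some ground returns. It then reports canopy cover as the fraction of cells taller than a threshold. Real workflows typically classify ground points and normalize heights first. The function names and the 2 m default threshold are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Cell = Tuple[int, int]


def canopy_height_model(points: Sequence[Point3], cell_size: float) -> Dict[Cell, float]:
    """Per grid cell, estimate vegetation height as highest minus lowest return.

    Crude assumption: every cell contains at least some ground returns, so the
    lowest return approximates the ground elevation.
    """
    if cell_size <= 0:
        raise ValueError("cell_size must be positive")
    heights: Dict[Cell, List[float]] = defaultdict(list)
    for x, y, z in points:
        heights[(math.floor(x / cell_size), math.floor(y / cell_size))].append(z)
    return {cell: max(zs) - min(zs) for cell, zs in heights.items()}


def canopy_cover(chm: Dict[Cell, float], min_height: float = 2.0) -> float:
    """Fraction of cells whose estimated vegetation height is at least min_height."""
    if not chm:
        return 0.0
    return sum(1 for h in chm.values() if h >= min_height) / len(chm)


if __name__ == "__main__":
    # One 10 m cell with a 15 m tree, one with low shrubs.
    returns = [(1.0, 1.0, 0.0), (2.0, 2.0, 15.0), (11.0, 1.0, 0.0), (12.0, 1.0, 0.5)]
    chm = canopy_height_model(returns, cell_size=10.0)
    print(chm)                # {(0, 0): 15.0, (1, 0): 0.5}
    print(canopy_cover(chm))  # 0.5
```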

Navigation and Mapping — You can build a map using the lidar data generated from a vehicle-mounted lidar sensor. You can use these maps for localization and navigation. To learn more about map building, see the Feature-Based Map Building from Lidar Data example.