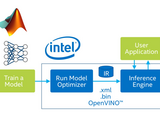

MATLAB to OpenVINO (Intel-Inteference)

Overview:

If you train your deep learning network in MATLAB, you can use OpenVINO to accelerate your solutions on Intel®-based hardware (CPUs, GPUs, FPGAs, and VPUs). This script does not compare OpenVINO with MATLAB's own deployment options (MATLAB Coder, HDL Coder); it only gives a rough idea of how to complete the MATLAB-to-OpenVINO workflow from a technical perspective.

Refer to the link below to learn more about OpenVINO:

https://software.intel.com/en-us/openvino-toolkit

Highlights:

Deep Learning and Prediction

How to export a deep learning model to ONNX format

How to deploy a simple classification application in OpenVINO R4 (third-party software)

Product Focus:

MATLAB

Deep Learning Toolbox

OpenVINO R4 (third-party software)

Written on 28 January 2018

Cite As

Kevin Chng (2024). MATLAB to OpenVINO (Intel-Inteference) (https://www.mathworks.com/matlabcentral/fileexchange/70330-matlab-to-openvino-intel-inteference), MATLAB Central File Exchange. Retrieved .

Platform Compatibility

Windows macOS Linux

MATLAB_OPENVINO(Upload)

| Version | Published | Release Notes |
|---|---|---|
| 1.0.0 | | |