getCritic

Extract critic from reinforcement learning agent

Description

Examples

Assume that you have an existing trained reinforcement learning agent. For this example, load the trained agent from Compare DDPG Agent to LQR Controller.

load("DoubleIntegDDPG.mat","agent")

Obtain the critic from the agent.

critic = getCritic(agent);

For approximator objects, you can access the Learnables property using dot notation.

First, display the parameters.

critic.Learnables{1}

ans = 1×6 single dlarray

-5.0017 -1.5513 -0.3424 -0.1116 -0.0506 -0.0047

Modify the parameter values. For this example, simply multiply all of the parameters by 2.

critic.Learnables{1} = critic.Learnables{1}*2;

Display the new parameters.

critic.Learnables{1}

ans = 1×6 single dlarray

-10.0034 -3.1026 -0.6848 -0.2232 -0.1011 -0.0094

Alternatively, you can use getLearnableParameters and setLearnableParameters.

First, obtain the learnable parameters from the critic.

params = getLearnableParameters(critic)

params=2×1 cell array

{[-10.0034 -3.1026 -0.6848 -0.2232 -0.1011 -0.0094]}

{[ 0]}

Modify the parameter values. For this example, simply divide all of the parameters by 2.

modifiedParams = cellfun(@(x) x/2,params,"UniformOutput",false);

Set the parameter values of the critic to the new modified values.

critic = setLearnableParameters(critic,modifiedParams);

Set the critic in the agent to the new modified critic.

setCritic(agent,critic);

Display the new parameter values.

getLearnableParameters(getCritic(agent))

ans=2×1 cell array

{[-5.0017 -1.5513 -0.3424 -0.1116 -0.0506 -0.0047]}

{[ 0]}
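As a quick sanity check, you can evaluate the modified critic for a sample observation and action. The following is a minimal sketch, assuming the agent from the preceding steps is still in the workspace and that its critic is a Q-value function, as is the case for a DDPG agent. The random inputs and the variable names obs, act, and q are illustrative only.

```matlab
% Assumes agent was loaded and modified as in the preceding steps.
critic = getCritic(agent);

% Read the specifications from the agent to size the sample inputs.
obsInfo = getObservationInfo(agent);
actInfo = getActionInfo(agent);

% Illustrative random inputs (not data from the original example).
obs = rand(obsInfo.Dimension);
act = rand(actInfo.Dimension);

% A Q-value function critic takes both the observation and the action,
% each wrapped in a cell array, and returns the estimated value.
q = getValue(critic,{obs},{act})
```

Because the inputs are random, the displayed value differs from run to run.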

Create an environment with a continuous action space and obtain its observation and action specifications. For this example, load the environment used in the example Compare DDPG Agent to LQR Controller.

Load the predefined environment.

env = rlPredefinedEnv("DoubleIntegrator-Continuous");

Obtain observation and action specifications.

obsInfo = getObservationInfo(env);

actInfo = getActionInfo(env);

Create a PPO agent from the environment observation and action specifications. This agent uses default deep neural networks for its actor and critic.

agent = rlPPOAgent(obsInfo,actInfo);

To modify the deep neural networks within a reinforcement learning agent, you must first extract the actor and critic function approximators.

actor = getActor(agent);

critic = getCritic(agent);

Extract the deep neural networks from both the actor and critic function approximators.

actorNet = getModel(actor);

criticNet = getModel(critic);

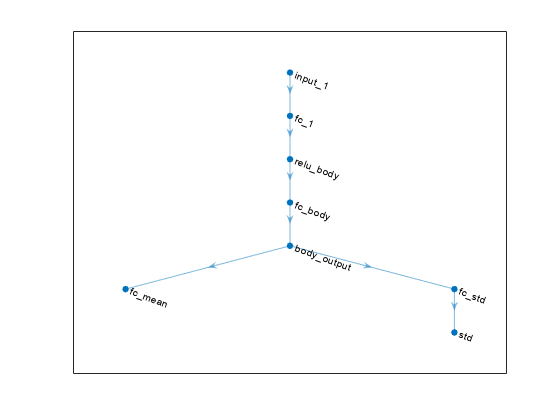

Plot the actor network.

plot(actorNet)

To validate a network, use analyzeNetwork. For example, validate the critic network.

analyzeNetwork(criticNet)

You can modify the actor and critic networks and save them back to the agent. To modify the networks, you can use the Deep Network Designer app. To open the app for each network, use the following commands.

deepNetworkDesigner(criticNet)

deepNetworkDesigner(actorNet)

In Deep Network Designer, modify the networks. For example, you can add additional layers to your network. When you modify the networks, do not change the input and output layers of the networks returned by getModel. For more information on building networks, see Build Networks with Deep Network Designer.

To validate the modified network in Deep Network Designer, click Analyze in the Analysis section. To export the modified network structures to the MATLAB® workspace, generate code for creating the new networks and run this code from the command line. Do not use the exporting option in Deep Network Designer. For an example that shows how to generate and run code, see Create DQN Agent Using Deep Network Designer and Train Using Image Observations.

For this example, the code for creating the modified actor and critic networks is in the createModifiedNetworks helper script.

createModifiedNetworks

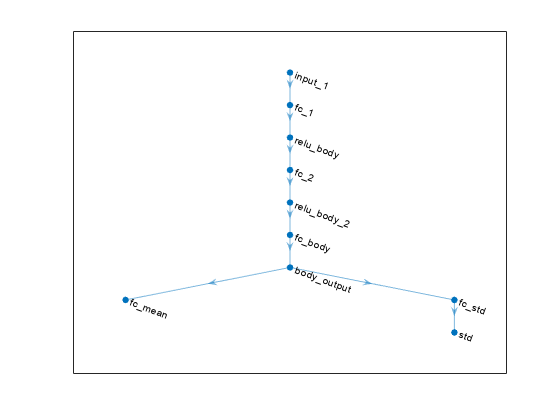

Each of the modified networks includes an additional fullyConnectedLayer and reluLayer in its main common path. Plot the modified actor network.

plot(modifiedActorNet)

After exporting the networks, insert the networks into the actor and critic function approximators.

actor = setModel(actor,modifiedActorNet);

critic = setModel(critic,modifiedCriticNet);

Finally, insert the modified actor and critic function approximators into the agent.

agent = setActor(agent,actor);

agent = setCritic(agent,critic);
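To check that the agent still works with the modified networks, one option is to ask it for an action from a random observation. This is a minimal sketch, assuming agent and obsInfo from the preceding steps are in the workspace. The variable names act and updatedCriticNet are illustrative.

```matlab
% Assumes agent and obsInfo from the preceding steps are in the workspace.
% getAction expects the observation wrapped in a cell array and returns
% the action in a cell array.
act = getAction(agent,{rand(obsInfo.Dimension)});
act{1}

% Optionally, list the layers of the critic network now stored in the
% agent to confirm that the additional layers are present.
updatedCriticNet = getModel(getCritic(agent));
updatedCriticNet.Layers
```

If the modified networks changed the input or output layers, this call is likely to be where a dimension mismatch first shows up.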

Input Arguments

Reinforcement learning agent that contains a critic, specified as one of the following objects:

rlPGAgent (when using a critic to estimate a baseline value function)

Note

For agents with more than one critic, such as TD3 and SAC agents, you must call getModel for each critic representation individually. You cannot call getModel for the array returned by getCritic.

critics = getCritic(myTD3Agent);

criticNet1 = getModel(critics(1));

criticNet2 = getModel(critics(2));

Note

agent is a handle object. For more information about handle objects, see Handle Object Behavior.
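In practice, handle behavior means that a function that modifies the agent changes the original object even when you do not assign an output. The following is a minimal sketch, assuming a default PPO agent built from illustrative specifications. The names paramsBefore, scaledParams, and paramsAfter are hypothetical. The critic approximator, by contrast, is updated through an output argument, as in the examples on this page.

```matlab
% Illustrative specifications, not tied to a particular environment.
obsInfo = rlNumericSpec([2 1]);
actInfo = rlNumericSpec([1 1]);
agent = rlPPOAgent(obsInfo,actInfo);

% Extract the critic and scale all of its learnable parameters by 2.
critic = getCritic(agent);
paramsBefore = getLearnableParameters(critic);
scaledParams = cellfun(@(x) x*2,paramsBefore,"UniformOutput",false);

% The approximator is updated through its output argument.
critic = setLearnableParameters(critic,scaledParams);

% No output argument here: the agent is a handle object, so the call
% updates the agent in place.
setCritic(agent,critic);

% Read the parameters back from the agent to inspect the change.
paramsAfter = getLearnableParameters(getCritic(agent));
paramsAfter{1}
```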

For more information on reinforcement learning agents, see Reinforcement Learning Agents.

Example: agent = rlPPOAgent(rlNumericSpec([2 1]),rlNumericSpec([1 1])) creates the default rlPPOAgent object agent.

Output Arguments

Critic object, returned as one of the following:

- rlValueFunction object — Returned when agent is an rlACAgent, rlPGAgent, or rlPPOAgent object.

- rlQValueFunction object — Returned when agent is an rlQAgent, rlSARSAAgent, rlDQNAgent, rlDDPGAgent, or rlTD3Agent object with a single critic.

- rlVectorQValueFunction object — Returned when agent is an rlQAgent, rlSARSAAgent, or rlDQNAgent object with a vector Q-value function critic, or an rlSACAgent object with a hybrid action space.

- Two-element row vector of rlQValueFunction objects — Returned when agent is an rlTD3Agent or rlSACAgent object with a continuous action space and two critics.

- Two-element row vector of rlVectorQValueFunction objects — Returned when agent is an rlSACAgent object with a noncontinuous action space and two critics.
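Because the return type depends on the agent, it can help to inspect the class and size of the output before calling functions such as getModel. The following is a minimal sketch, assuming default PPO and TD3 agents created from illustrative specifications. The variable names are hypothetical, and the number of critics in a default TD3 agent may depend on your release.

```matlab
% Illustrative specifications, not tied to a particular environment.
obsInfo = rlNumericSpec([2 1]);
actInfo = rlNumericSpec([1 1]);

% PPO agent: the critic is a single value function object.
ppoAgent = rlPPOAgent(obsInfo,actInfo);
ppoCritic = getCritic(ppoAgent);
class(ppoCritic)

% TD3 agent: the output can be a row vector of Q-value function objects.
td3Agent = rlTD3Agent(obsInfo,actInfo);
td3Critics = getCritic(td3Agent);
class(td3Critics)
numel(td3Critics)

% Index into the output so that getModel always receives one critic.
firstCriticNet = getModel(td3Critics(1));
```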

Version History

Introduced in R2019a
